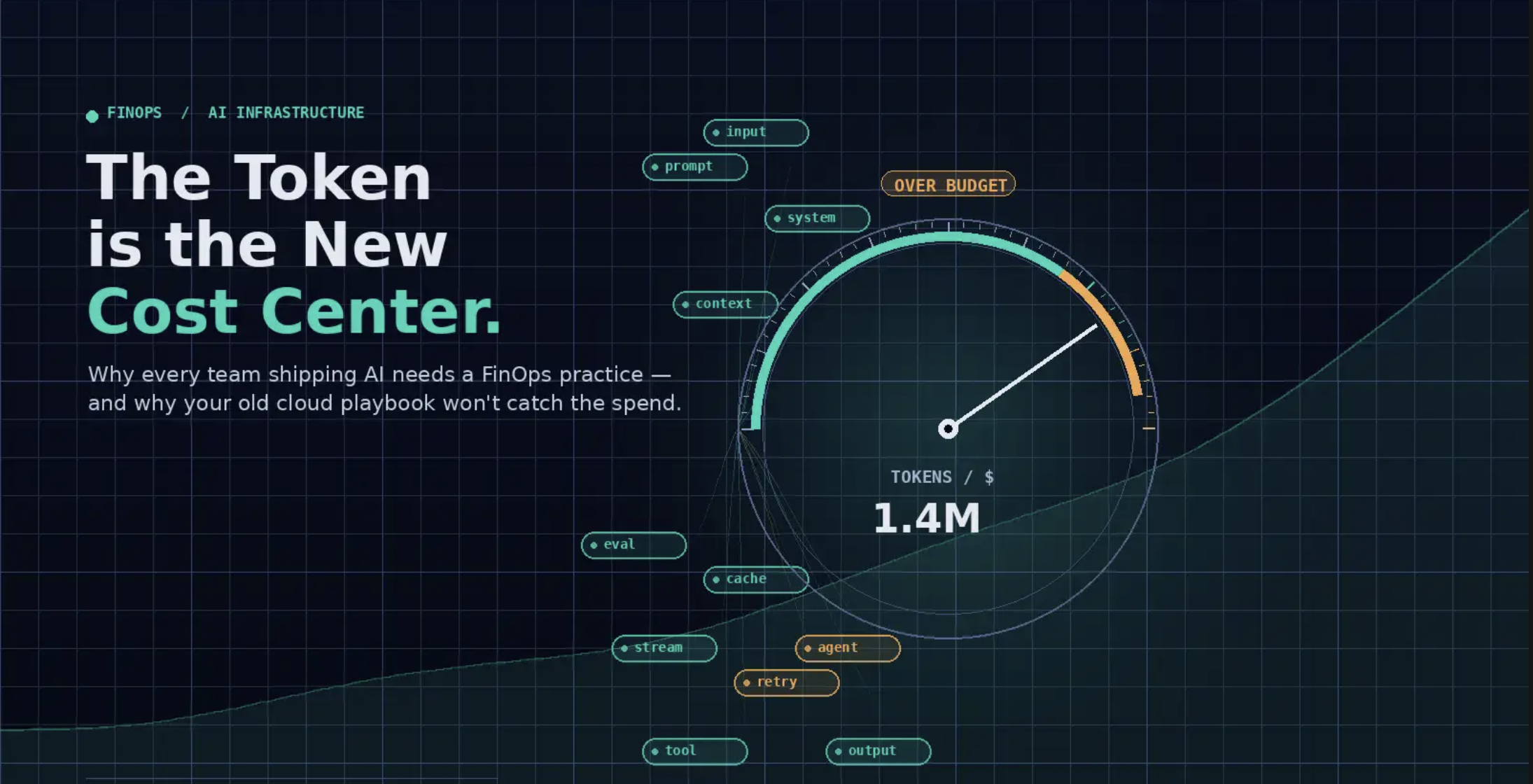

The Token Is the New Cost Center: Why FinOps Has to Apply to AI Tokens

For more than a decade, FinOps has been a discipline built around a fairly stable physics: you provision resources, the resources have a price, and the price is roughly knowable in advance. A c6i.4xlarge costs what it costs. An S3 GET is fractions of a cent. The hard parts of FinOps were attribution and discipline, not arithmetic.

AI tokens broke that physics.

If you're shipping anything backed by an LLM — a copilot, a search experience, an internal agent, a customer-facing assistant — you have a new line item that doesn't behave like the rest of your infrastructure. It's volatile, it's user-driven, it's poorly tagged at the source, and it scales with success in ways that can quietly destroy a gross margin. The FinOps Foundation now treats this as its own scope of practice, and the FinOps for AI Overview explicitly calls out tokens as the primary cost meter for AI usage.

Here's the part that should make every founder and engineering leader pay attention: the maturity gap between teams that are doing FinOps for tokens and teams that aren't is going to show up in the P&L within a quarter or two. Not eventually. Soon.

Why tokens are different

A traditional cloud workload has a cost shape. You can look at a service and say, "this runs on three replicas, each provisioned at this size, consuming this much memory, ninety-fifth percentile latency is here." From there, cost is mostly a math problem.

Token cost is not a math problem. It's a behavioral one.

The same prompt, issued by two different users, can produce wildly different invoices. Context window length varies. Retry loops vary. Tool-calling depth varies. Whether the model decides to chain three calls or thirty varies. Whether you're hitting a cache or paying full freight varies. The "resource" being consumed is an API call whose size is determined at runtime by a non-deterministic system reacting to user input.

That has four immediate consequences your old FinOps tooling wasn't built for:

1. Inputs and outputs are priced separately, and they don't move together. A user who asks a one-line question can trigger a 4,000-token response. A user who pastes a 50,000-token document can get a one-paragraph answer. Your cost-per-query distribution is not normal — it's heavy-tailed. Averages lie.

2. The cost driver lives in user behavior, not infrastructure config. You can't right-size your way out of a power user who runs an agent in a loop overnight. The lever isn't instance type. It's prompt design, model routing, caching, and rate limits.

3. Provider invoices are flat. Most LLM providers don't expose tag-based cost attribution the way AWS, GCP, and Azure do. A token spent by your platform team's coding agent looks identical on the invoice to a token spent by your customer-facing product. As the FinOps for AI Agents allocation framework puts it, this is a structural gap, not a configuration gap. You have to build the attribution layer yourself, in front of the provider.

4. Pricing itself is unstable. Models get deprecated. New variants ship monthly. The price-per-million-tokens for a given capability tier is dropping over time on average, but individual model upgrades can double a line item overnight. Your forecast model needs to assume the underlying unit cost is a moving target.

Put those four together and you get the central FinOps-for-tokens problem: you cannot manage what you cannot see, and what you need to see is not what your provider is showing you.
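
To make the first consequence concrete, here is a minimal sketch of per-call cost with input and output tokens priced separately. The two prices and the sample calls are made-up assumptions, not any provider's real rates; the point is only how far the mean drifts from the median once a few heavy calls show up.

```python
from statistics import mean, median

# Hypothetical prices in USD per million tokens; substitute your provider's real rates.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


def call_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost of one call, with input and output tokens priced separately."""
    return (input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


# Made-up (input_tokens, output_tokens) pairs: eight small queries plus two heavy ones.
calls = [(200, 300)] * 8 + [(50_000, 400), (120_000, 4_000)]
costs = sorted(call_cost(i, o) for i, o in calls)

print(f"mean   ${mean(costs):.4f}")
print(f"median ${median(costs):.4f}")
print(f"max    ${costs[-1]:.4f}")
```

With these illustrative numbers the mean comes out roughly twelve times the median, which is why percentiles and per-tenant breakdowns tend to be more honest than an average cost per query.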

What "FinOps for tokens" actually means in practice

Strip away the framework language and the work breaks into three things, in order: visibility, attribution, and unit economics. You can't skip ahead. Teams that try to optimize before they can attribute end up tuning the wrong knobs.

Visibility: log every call, in your own system

The first move is operational, not financial. Every model call your application makes — every one — needs to be logged in a system you control, with at minimum: timestamp, calling service, user or tenant ID, model, input tokens, output tokens, cached tokens, latency, and success/failure. Provider dashboards are not enough. They tell you what you spent in aggregate; they don't tell you who spent it or why.
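
One way to pin down that minimum field list is a small record type. This is a sketch under assumptions: the class name, field names, units, and the JSON-lines output are illustrative choices, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ModelCallRecord:
    """One row per model call; field names are hypothetical."""
    timestamp: str          # ISO 8601, UTC
    calling_service: str
    tenant_id: str          # user or tenant ID
    model: str
    input_tokens: int
    output_tokens: int
    cached_tokens: int
    latency_ms: float
    success: bool


def to_log_line(record: ModelCallRecord) -> str:
    """Serialize a record as one JSON line for whatever log store you control."""
    return json.dumps(asdict(record), sort_keys=True)


record = ModelCallRecord(
    timestamp=datetime.now(timezone.utc).isoformat(),
    calling_service="support-bot",
    tenant_id="acme",
    model="example-model",
    input_tokens=1200,
    output_tokens=350,
    cached_tokens=800,
    latency_ms=912.5,
    success=True,
)
print(to_log_line(record))
```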

The cleanest pattern is a gateway: every call to OpenAI, Anthropic, Bedrock, your self-hosted model — anything — passes through a single proxy that does the logging. That proxy becomes your source of truth for usage data. It's also where you'll later add caching, routing, rate limits, and PII handling, but visibility is the table-stakes use case. A proper AI gateway gives you a single pane of glass for token tracking across providers.
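
The gateway idea can be sketched in a few lines. Everything here is an assumption for illustration: `ProviderFn` is a hypothetical uniform signature (real SDK responses differ by provider), `USAGE_LOG` stands in for a durable store, and `fake_provider` replaces a real API call.

```python
import time
from typing import Callable, Dict, List

# Hypothetical provider signature: (model, prompt) -> dict with
# "text", "input_tokens", and "output_tokens".
ProviderFn = Callable[[str, str], Dict]

USAGE_LOG: List[Dict] = []  # stand-in for a database or log pipeline


def gateway_call(provider: ProviderFn, model: str, prompt: str, *,
                 service: str, tenant_id: str) -> Dict:
    """Call the provider and record usage, whether the call succeeds or fails."""
    started = time.monotonic()
    entry = {"ts": time.time(), "service": service, "tenant_id": tenant_id,
             "model": model, "input_tokens": 0, "output_tokens": 0, "success": False}
    try:
        response = provider(model, prompt)
        entry.update(input_tokens=response["input_tokens"],
                     output_tokens=response["output_tokens"],
                     success=True)
        return response
    finally:
        # Runs on both success and exception, so failed calls are logged too.
        entry["latency_ms"] = (time.monotonic() - started) * 1000
        USAGE_LOG.append(entry)


def fake_provider(model: str, prompt: str) -> Dict:
    """Test double; counts whitespace-separated words instead of real tokens."""
    return {"text": "ok", "input_tokens": len(prompt.split()), "output_tokens": 1}


gateway_call(fake_provider, "small-model", "summarize this ticket",
             service="support-bot", tenant_id="acme")
print(USAGE_LOG[-1])
```

Because the logging sits in a `finally` block, failures and retries land in the same log as successes, which matters later when you attribute spend by workflow stage.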

If you're running multi-provider, this is non-negotiable. You will not stitch together attribution from CSVs at month-end. Don't try.

Attribution: tag at call time, not at month-end

Once every call passes through your own logging, you can attach the metadata that providers don't give you. The dimensions that actually matter for AI spend:

- Tenant — which customer triggered the call

- Feature — which product surface (the summarizer? the search box? the agent?)

- Environment — prod, staging, eval, internal tooling

- Workflow stage — initial prompt, retry, tool call, evaluation

- Cost center — which team owns the resulting spend

The reason to tag at call time, in code, is that this information is irretrievable later. Once a request is in the provider's billing system, all you have is a model name and a timestamp. The taxonomy lives at your gateway or it doesn't live at all.
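
A sketch of what tagging at call time might look like in code, using the five dimensions listed above. The type names and the validation rule are assumptions; the idea is that a call without complete tags fails loudly before it reaches the provider.

```python
from dataclasses import dataclass
from enum import Enum


class Environment(str, Enum):
    PROD = "prod"
    STAGING = "staging"
    EVAL = "eval"
    INTERNAL = "internal"


class WorkflowStage(str, Enum):
    INITIAL_PROMPT = "initial_prompt"
    RETRY = "retry"
    TOOL_CALL = "tool_call"
    EVALUATION = "evaluation"


@dataclass(frozen=True)
class CallTags:
    """Attribution metadata attached to every model call (hypothetical shape)."""
    tenant: str
    feature: str
    environment: Environment
    workflow_stage: WorkflowStage
    cost_center: str

    def __post_init__(self) -> None:
        # Reject blank free-text tags so untagged spend cannot slip through.
        for name in ("tenant", "feature", "cost_center"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} tag must not be empty")


tags = CallTags(
    tenant="acme",
    feature="summarizer",
    environment=Environment.PROD,
    workflow_stage=WorkflowStage.INITIAL_PROMPT,
    cost_center="product-assistant",
)
print(tags)
```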

This is also where shared spend gets tricky. A coding assistant used by an internal platform team and a coding assistant used by a product team produce identical invoice lines but call for very different accounting treatment: one is internal productivity, the other is COGS. You need a back-allocation rule that you apply after the fact using your own taxonomy, not the provider's.
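
A back-allocation rule can be as simple as a lookup from your own cost-center tag to an accounting treatment. The mapping, cost-center names, and dollar figures below are hypothetical; the sketch only shows the shape of the rule, including an explicit bucket for spend that nobody tagged.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical mapping from cost center (your taxonomy) to accounting treatment.
TREATMENT_BY_COST_CENTER = {
    "platform-eng": "internal_productivity",
    "product-assistant": "cogs",
}


def allocate(records: List[Dict]) -> Dict[str, float]:
    """Sum logged cost per accounting treatment; unknown cost centers stay visible."""
    totals: Dict[str, float] = defaultdict(float)
    for rec in records:
        treatment = TREATMENT_BY_COST_CENTER.get(rec["cost_center"], "unallocated")
        totals[treatment] += rec["cost_usd"]
    return dict(totals)


# Made-up rows as they might come out of the gateway log.
logged = [
    {"cost_center": "platform-eng", "cost_usd": 12.5},
    {"cost_center": "product-assistant", "cost_usd": 40.0},
    {"cost_center": "product-assistant", "cost_usd": 3.25},
    {"cost_center": "mystery-script", "cost_usd": 1.5},
]
print(allocate(logged))
```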

Unit economics: cost per outcome, not cost per token

Once you can see and attribute spend, the real question shows up: what is your cost per unit of value delivered?

For a B2B SaaS company shipping AI features, that usually means:

- Cost per active user per month

- Cost per resolved support ticket / generated document / completed workflow

- Cost per customer (especially the top-decile heavy users — they're often where margin goes to die)

- Cost as a percentage of revenue per feature

The KPI to watch isn't aggregate spend. It's cost per outcome, trending over time. If your usage doubles and your cost per resolved ticket stays flat, you're scaling well. If your cost per resolved ticket is climbing, something is wrong — the model is getting more expensive, your prompts are getting more bloated, or your power users are finding new ways to drive context length up.
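
The arithmetic behind that KPI is simple enough to sketch. The monthly figures are invented for illustration, and "resolved tickets" stands in for whatever outcome your product actually delivers.

```python
from typing import List, Optional, Tuple


def cost_per_outcome(spend_usd: float, outcomes: int) -> Optional[float]:
    """Attributed token spend divided by outcomes; None when there were no outcomes."""
    return spend_usd / outcomes if outcomes > 0 else None


# Made-up data: (period, attributed token spend in USD, resolved tickets).
monthly: List[Tuple[str, float, int]] = [
    ("month 1", 1000.0, 5000),
    ("month 2", 2000.0, 10000),
    ("month 3", 3600.0, 12000),
]

trend = [(label, cost_per_outcome(spend, n)) for label, spend, n in monthly]
for label, unit_cost in trend:
    print(f"{label}: ${unit_cost:.2f} per resolved ticket")
```

In this toy series, usage doubles from month 1 to month 2 while the unit cost holds at $0.20, which is the healthy case. Month 3 climbs to $0.30 per ticket, which is the signal to go look at model choice, prompt size, and heavy users.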

This is the level at which FinOps for tokens earns its place at the leadership table. Aggregate spend is a CFO conversation. Cost per outcome is a product and pricing conversation, and it's the one that determines whether the AI feature you just shipped is a margin contributor or a slow leak.

The optimization moves, in priority order

Once visibility and attribution are real, the optimization playbook is reasonably well understood. Roughly in order of impact:

Route to the cheapest sufficient model. Most prompts don't need your best model. A small classifier in front of the gateway, picking between a fast/cheap model and a smart/expensive one based on prompt characteristics, is often a 40–70% cost reduction with no perceptible quality drop. This is the single highest-leverage change for most teams.
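
As a rough illustration of routing, the sketch below uses a hand-written rule as a stand-in for the small classifier described above. The model names, keyword list, and word threshold are all assumptions; a real router would be tuned and evaluated against your own quality data.

```python
CHEAP_MODEL = "cheap-model"          # hypothetical model identifiers
EXPENSIVE_MODEL = "expensive-model"

# Hypothetical hints that a prompt needs the stronger model.
HARD_TASK_HINTS = ("prove", "refactor", "step by step", "analyze")


def route(prompt: str, max_cheap_words: int = 200) -> str:
    """Pick a model from simple prompt characteristics (rule-based stand-in)."""
    text = prompt.lower()
    if len(text.split()) > max_cheap_words:
        return EXPENSIVE_MODEL
    if any(hint in text for hint in HARD_TASK_HINTS):
        return EXPENSIVE_MODEL
    return CHEAP_MODEL


print(route("What are your opening hours?"))
print(route("Refactor this module step by step"))
```

Logging the routing decision alongside the call record lets you check later whether the cheap path is actually holding quality.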

Cache aggressively. Prompt caching, response caching for idempotent calls, embedding caching for retrieval. Cached tokens are typically priced at a fraction of fresh tokens. For agent workflows that re-send the same system prompt and context across every step, caching alone can cut bills by half or more.
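
Of the three kinds of caching, response caching for idempotent calls is the easiest to sketch at the application level. This is an in-memory illustration only, with hypothetical names; it is not a provider's prompt-caching feature, and a real deployment would need expiry and a shared store.

```python
import hashlib
import json
from typing import Callable, Dict


class ResponseCache:
    """In-memory response cache keyed by model, prompt, and parameters."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str, params: dict) -> str:
        payload = json.dumps({"model": model, "prompt": prompt, "params": params},
                             sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, prompt: str, params: dict,
                    call: Callable[[], str]) -> str:
        """Return the cached response, or invoke `call` once and remember the result."""
        key = self._key(model, prompt, params)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = call()
        self._store[key] = result
        return result


cache = ResponseCache()
calls_made = []


def fake_model_call() -> str:
    calls_made.append(1)  # count how often the "provider" is really hit
    return "summary text"


for _ in range(3):
    cache.get_or_call("small-model", "Summarize doc 42", {"temperature": 0}, fake_model_call)
print(len(calls_made), cache.hits, cache.misses)
```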

Trim the context window. The default behavior for most RAG and agent systems is to stuff context until it almost overflows. That's an unforced error. Retrieval quality saturates well before the context limit, and every extra token is billed as input on every call that carries it.
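
One simple way to cap context is to keep the highest-scoring retrieved chunks that fit a token budget. In this sketch the relevance scores are assumed to come from your retriever, and the default token counter is a crude whitespace split used only for illustration; swap in your model's real tokenizer.

```python
from typing import Callable, List, Tuple


def approx_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def trim_context(chunks: List[Tuple[float, str]], token_budget: int,
                 count_tokens: Callable[[str], int] = approx_tokens) -> List[str]:
    """Greedily keep the best-scoring chunks whose total size fits the budget."""
    selected: List[str] = []
    used = 0
    for _score, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        size = count_tokens(text)
        if used + size > token_budget:
            continue  # skip this chunk, but a smaller one may still fit
        selected.append(text)
        used += size
    return selected


# Made-up (relevance score, chunk text) pairs.
retrieved = [(0.9, "a b c"), (0.8, "d e f g"), (0.2, "h")]
print(trim_context(retrieved, token_budget=4))
```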

Set hard budgets per tenant and per feature. Not soft alerts — actual rate limits and spend caps enforced at the gateway. Anomalies happen. A buggy retry loop, a malicious prompt, a runaway agent. Without enforcement, your worst-case scenario is unbounded.
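
A hard cap differs from an alert in that the request is refused. The sketch below keys limits by (tenant, feature) and rejects any charge that would cross the limit. The class, exception, and limit values are hypothetical, and a production gateway would also need period resets, persistence, and concurrency control.

```python
from typing import Dict, Tuple

BudgetKey = Tuple[str, str]  # (tenant, feature)


class BudgetExceeded(Exception):
    """Raised when a charge would push spend past the hard cap."""


class SpendCap:
    def __init__(self, limits_usd: Dict[BudgetKey, float]) -> None:
        self._limits = dict(limits_usd)
        self._spent: Dict[BudgetKey, float] = {key: 0.0 for key in limits_usd}

    def charge(self, key: BudgetKey, cost_usd: float) -> None:
        """Record spend, or refuse if it would exceed the cap for this key."""
        if key not in self._limits:
            raise KeyError(f"no budget configured for {key}")
        if self._spent[key] + cost_usd > self._limits[key]:
            raise BudgetExceeded(f"{key} would exceed ${self._limits[key]:.2f}")
        self._spent[key] += cost_usd

    def remaining(self, key: BudgetKey) -> float:
        return self._limits[key] - self._spent[key]


caps = SpendCap({("acme", "agent"): 10.0})
caps.charge(("acme", "agent"), 6.0)
try:
    caps.charge(("acme", "agent"), 5.0)  # would total 11.0, so it is refused
except BudgetExceeded as exc:
    print("blocked:", exc)
print("remaining:", caps.remaining(("acme", "agent")))
```

Refusing unconfigured keys outright is a deliberate choice here: a tenant or feature with no budget is treated as a configuration error rather than as unlimited.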

Negotiate committed spend once you know your floor. This one comes last on purpose. You cannot intelligently commit to volume until you have at least two months of clean attribution data. Committing early is how you end up paying for capacity you don't use, on a model you've since stopped using.
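
One conservative reading of "floor" is the lowest monthly spend you have observed once attribution is clean. The sketch below encodes that reading as an assumption, along with the two-month minimum from the paragraph above; the spend figures are invented, and your own definition of the floor may reasonably differ.

```python
from typing import List


def commitment_floor(monthly_spend_usd: List[float], min_months: int = 2) -> float:
    """Lowest observed monthly spend; refuses to answer with too little history."""
    if len(monthly_spend_usd) < min_months:
        raise ValueError(f"need at least {min_months} months of clean attribution data")
    return min(monthly_spend_usd)


# Made-up monthly totals from the attribution layer.
print(commitment_floor([8200.0, 7400.0, 9100.0]))
```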

Where this shows up in your business

The teams treating FinOps for tokens as a real practice — not a dashboard, a practice — are doing it because they figured out something the laggards haven't: AI feature pricing decisions, customer profitability analysis, and platform architecture choices all depend on knowing your token unit economics. If you don't know what an active user costs you in tokens, you can't price the product, you can't decide which customers to invest in, and you can't tell engineering whether the next architectural choice is going to pay for itself.

This isn't a finance function bolted onto an engineering org. It's a shared discipline, the same way cloud FinOps had to become one a decade ago. The teams that get it right will have a real advantage — not because they spend less, but because they'll know what they're spending and why, while their competitors are still squinting at provider dashboards trying to figure out where last month's bill went.

The token is the new cost center. Treat it like one.

If you're building AI features and want to stop guessing at your token economics, Behest gives you a unified AI backend deployed in your own cloud — including the gateway, attribution, and observability layer this post is describing. We'd love to hear what you're seeing in your own stack.