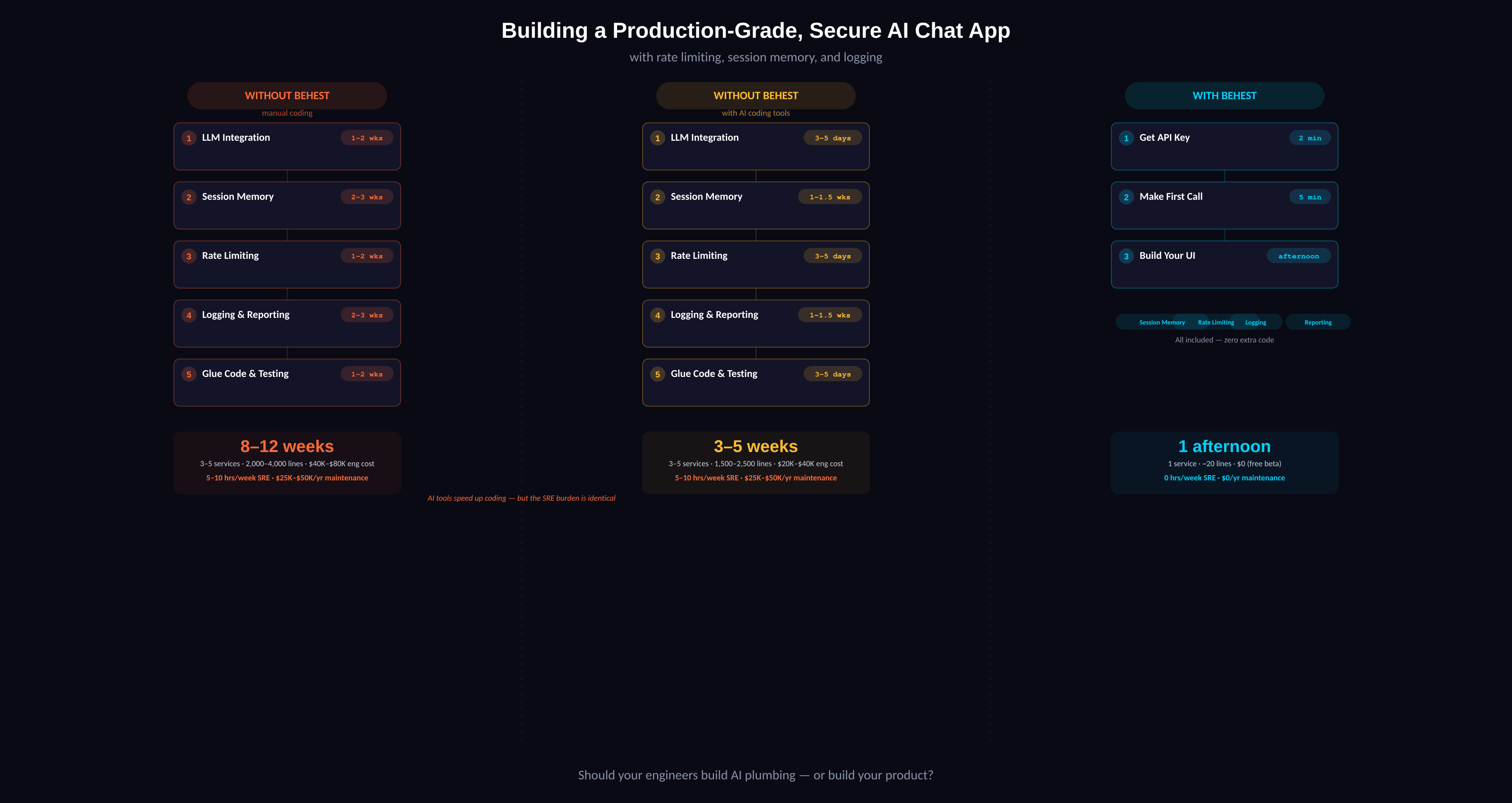

Building a Production-Grade, Secure AI Chat App: With Behest vs. Without

You want to ship an AI-powered chat feature in your product. Users need to have conversations with an AI assistant, and you need it production-ready — with rate limiting, session memory, and logging/reporting.

Here's what that actually looks like.

Without Behest

With AI Coding Tools (Claude Code, Cursor): 3–5 weeks

Step 1: LLM Integration (3–5 days)

Pick a provider. AI tools can scaffold the wrappers fast, but you still need to decide on retry logic, streaming strategy, fallback providers, and error handling patterns. Cursor won't make those architectural calls for you.
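To make the retry and fallback decision concrete, here is a minimal sketch of one possible policy: try each provider in order, retrying with exponential backoff and jitter before moving on. `ProviderError`, `complete_with_fallback`, and the default values are hypothetical names and numbers chosen for illustration, not any provider's API.

```python
import random
import time
from typing import Callable, Optional, Sequence


class ProviderError(Exception):
    """Hypothetical error a provider wrapper raises for a retryable failure."""


def complete_with_fallback(
    providers: Sequence[Callable[[str], str]],
    prompt: str,
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try each provider in order, retrying each with exponential backoff."""
    last_error: Optional[Exception] = None
    for call in providers:
        for attempt in range(max_attempts):
            try:
                return call(prompt)
            except ProviderError as exc:
                last_error = exc
                if attempt < max_attempts - 1:
                    # Delay doubles per attempt; jitter spreads out retries.
                    sleep(base_delay * (2 ** attempt) + random.uniform(0, base_delay))
    raise RuntimeError("all providers failed") from last_error
```

Even in this small form the open questions are visible: which errors count as retryable, how many attempts each provider gets, and whether a fallback model is acceptable for the user-facing answer.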

Step 2: Session Memory (1–1.5 weeks)

Claude Code can generate the Redis/Postgres schema and retrieval logic quickly. But you still need to design the context window strategy, test token limit edge cases, decide on TTL policies, and handle the "user has 500 messages" scenario. The decisions take longer than the code.
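One simple context window strategy for the "user has 500 messages" case is to keep only the most recent messages that fit a token budget. The sketch below assumes a crude estimate of about four characters per token; `estimate_tokens` and `trim_history` are hypothetical helpers, and a real system would use the provider's tokenizer and probably summarize older turns instead of dropping them.

```python
from typing import Dict, List

Message = Dict[str, str]


def estimate_tokens(text: str) -> int:
    """Crude estimate (about 4 characters per token); swap in a real tokenizer."""
    return max(1, len(text) // 4)


def trim_history(messages: List[Message], max_tokens: int) -> List[Message]:
    """Keep the newest messages whose combined estimate fits within max_tokens."""
    kept: List[Message] = []
    used = 0
    # Walk backwards from the newest message and stop once the budget is spent.
    for message in reversed(messages):
        cost = estimate_tokens(message["content"])
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # Restore chronological order.
    return kept
```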

Step 3: Rate Limiting (3–5 days)

AI tools write the sliding window algorithm in minutes. But you still need to configure per-user vs. per-org limits, handle burst scenarios, decide which rate limit headers to return, and test concurrent edge cases.
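For reference, the algorithm itself really is small. This is a minimal single-process sketch of a sliding window limiter keyed by user or org; `SlidingWindowLimiter` is a hypothetical name, and the sketch is not thread-safe or shared across instances, which is exactly where the remaining work goes.

```python
import time
from collections import defaultdict, deque
from typing import Callable, Deque, Dict


class SlidingWindowLimiter:
    """Allow at most `limit` requests per key within the last `window` seconds."""

    def __init__(self, limit: int, window: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window = window
        self.clock = clock
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, key: str) -> bool:
        now = self.clock()
        hits = self._hits[key]
        # Drop timestamps that have slid out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return False  # The caller would typically respond with HTTP 429.
        hits.append(now)
        return True
```

Using a key such as `"user:123"` or `"org:9"` covers the per-user vs. per-org split, but enforcing both limits on the same request, sharing state between app servers, and handling bursts are still separate decisions.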

Step 4: Logging & Reporting (1–1.5 weeks)

The ELK/Datadog integration code is quick to write with AI help. But structuring the log schema, building useful dashboards, and setting up meaningful alerts still takes at least a full week of iteration.
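As an illustration of what "structuring the log schema" means in practice, here is a minimal sketch that emits one JSON line per chat request. The field names in `ChatRequestLog` are assumptions for the example, not a schema required by ELK or Datadog.

```python
import json
import logging
from dataclasses import asdict, dataclass


@dataclass
class ChatRequestLog:
    """Hypothetical per-request log record; adjust fields to your needs."""
    model: str
    session_id: str
    user_id: str
    prompt_tokens: int
    completion_tokens: int
    latency_ms: float
    status: int


def to_log_line(record: ChatRequestLog) -> str:
    """Serialize one record as a single JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


def log_request(record: ChatRequestLog) -> None:
    # One JSON object per line is easy for most log shippers to parse.
    logging.getLogger("chat.requests").info(to_log_line(record))
```

Agreeing on these fields early matters more than the code: dashboards and alerts are built on top of them, and renaming a field later means rebuilding both.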

Step 5: Glue Code & Testing (3–5 days)

AI tools can help write integration tests, but you still need to manually verify failure modes across 3–5 services and load test the whole system.

Total: 3–5 weeks. 3–5 services. ~1,500–2,500 lines of infrastructure code.

Without AI Coding Tools: 8–12 weeks

Same steps, but every line is hand-written. API wrappers, Redis integration, rate limiter middleware, logging pipeline, dashboards, glue code, integration tests — all manual.

Total: 8–12 weeks. 3–5 services. ~2,000–4,000 lines of infrastructure code.

The Part Nobody Talks About: Ongoing SRE

Whether or not you used AI tools, you now **own** 3–5 production services. That means:

- 5–10 hours/week of ongoing maintenance — patching, monitoring, scaling, debugging

- On-call rotations — when Redis goes down at 3am, that's your problem

- Annual SRE cost: $25K–$50K in engineer time just to keep the lights on

- Every provider update breaks something — new API versions, deprecated endpoints, rate limit changes
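The hours and the dollar figure above line up if you assume a loaded engineer cost of roughly $96 per hour. That rate is inferred from the two ranges and is not stated anywhere in this post, so plug in your own number:

```python
WEEKS_PER_YEAR = 52


def annual_maintenance_cost(hours_per_week: float, hourly_rate: float) -> float:
    """Yearly cost of recurring maintenance at a given loaded hourly rate."""
    return hours_per_week * WEEKS_PER_YEAR * hourly_rate


# Assumed rate of $96/hour; replace with your own loaded engineer cost.
low = annual_maintenance_cost(5, 96)    # 24960
high = annual_maintenance_cost(10, 96)  # 49920
print(f"${low:,.0f} to ${high:,.0f} per year")  # prints "$24,960 to $49,920 per year"
```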

With Behest: 1 afternoon

Step 1: Get your API key (2 minutes)

Sign up at dashboard.behest.ai. Copy your API key.

Step 2: Make your first call (5 minutes)

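bash:

    # The endpoint URL is not shown in this snippet; BEHEST_API_URL is a
    # placeholder for the URL listed in your dashboard.
    curl -X POST "$BEHEST_API_URL" \
      -H "Authorization: Bearer $YOUR_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "gemini-2.5-flash",
        "session_id": "user-123-session-abc",
        "system_prompt": "You are a helpful product assistant.",
        "messages": [{"role": "user", "content": "How do I reset my password?"}]
      }'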

That's it. One call. Here's what happened behind the scenes:

- Session memory: Behest stored the conversation. The next request with the same `session_id` automatically includes the full context.

- Rate limiting: Built in. Requests are capped at your plan's configured limits, and anything over the limit gets a 429 automatically.

- Logging & reporting: Every request is logged — model, tokens, latency, session, user. Visible in your dashboard.
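In application code, the same call might look like the sketch below. It uses only the fields from the request above; the endpoint URL is not given in this post, so it is read from a hypothetical `BEHEST_API_URL` environment variable, and the response is returned as parsed JSON because its shape is not documented here.

```python
import json
import os
import urllib.request
from typing import Any, Dict


def build_chat_request(url: str, api_key: str, session_id: str,
                       user_message: str) -> urllib.request.Request:
    """Build the POST request using the fields shown in the curl example."""
    payload = {
        "model": "gemini-2.5-flash",
        "session_id": session_id,
        "system_prompt": "You are a helpful product assistant.",
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_chat(request: urllib.request.Request, timeout: float = 30.0) -> Dict[str, Any]:
    """Send the request; a 429 surfaces as urllib.error.HTTPError with code 429."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


if __name__ == "__main__":
    # BEHEST_API_URL is an assumed variable name; YOUR_KEY matches the curl example.
    req = build_chat_request(os.environ["BEHEST_API_URL"], os.environ["YOUR_KEY"],
                             "user-123-session-abc", "How do I reset my password?")
    print(send_chat(req))
```

Reusing the same `session_id` on later calls is what carries the conversation forward, so the client does not need to store or resend earlier messages.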

Step 3: Build your UI (the rest of the afternoon)

Total: 1 afternoon. 1 service. ~20 lines of integration code.

Ongoing SRE: Zero

The question isn't whether you *can* build all this yourself. You can — and AI coding tools make it faster than ever. But faster to build still means 3–5 services to own, 5–10 hours a week to maintain, and a 3am pager that never stops buzzing.

The real question is: should your engineers be building AI plumbing, or building your product?

Try the beta now: [dashboard.behest.ai](https://dashboard.behest.ai)